Tech

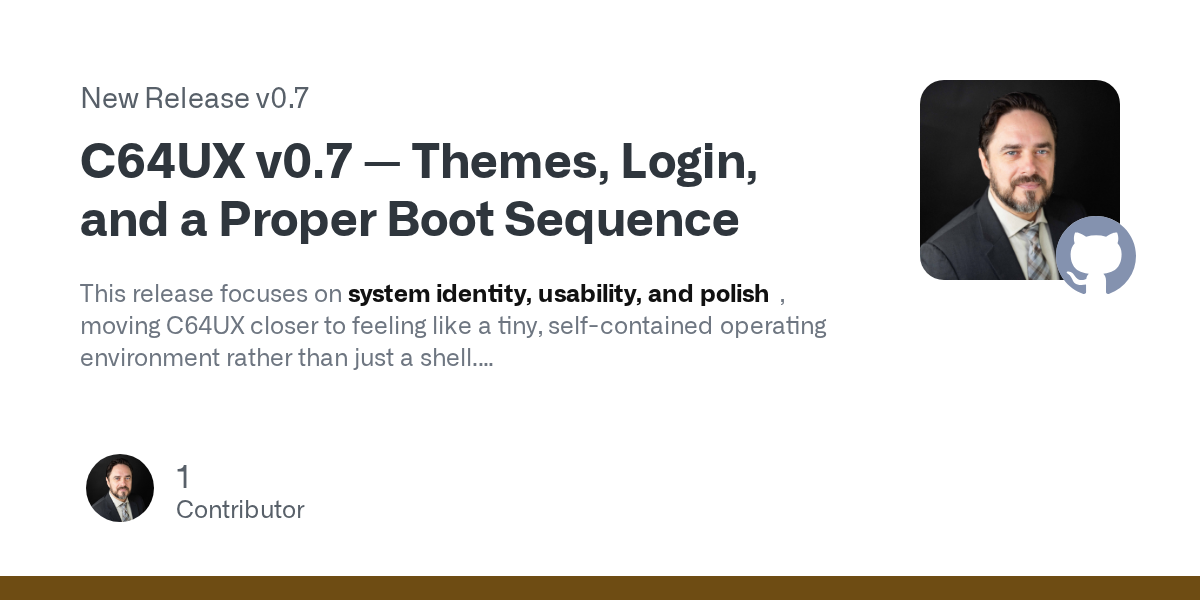

Show HN: ZSE – Open-source LLM inference engine with 3.9s cold starts

I've been building ZSE (Z Server Engine) for the past few weeks. It is an open-source LLM inference engine focused on two things nobody has fully solved together: memory efficiency and fast cold starts.

The problem I was trying to solve: Running a 32B mod